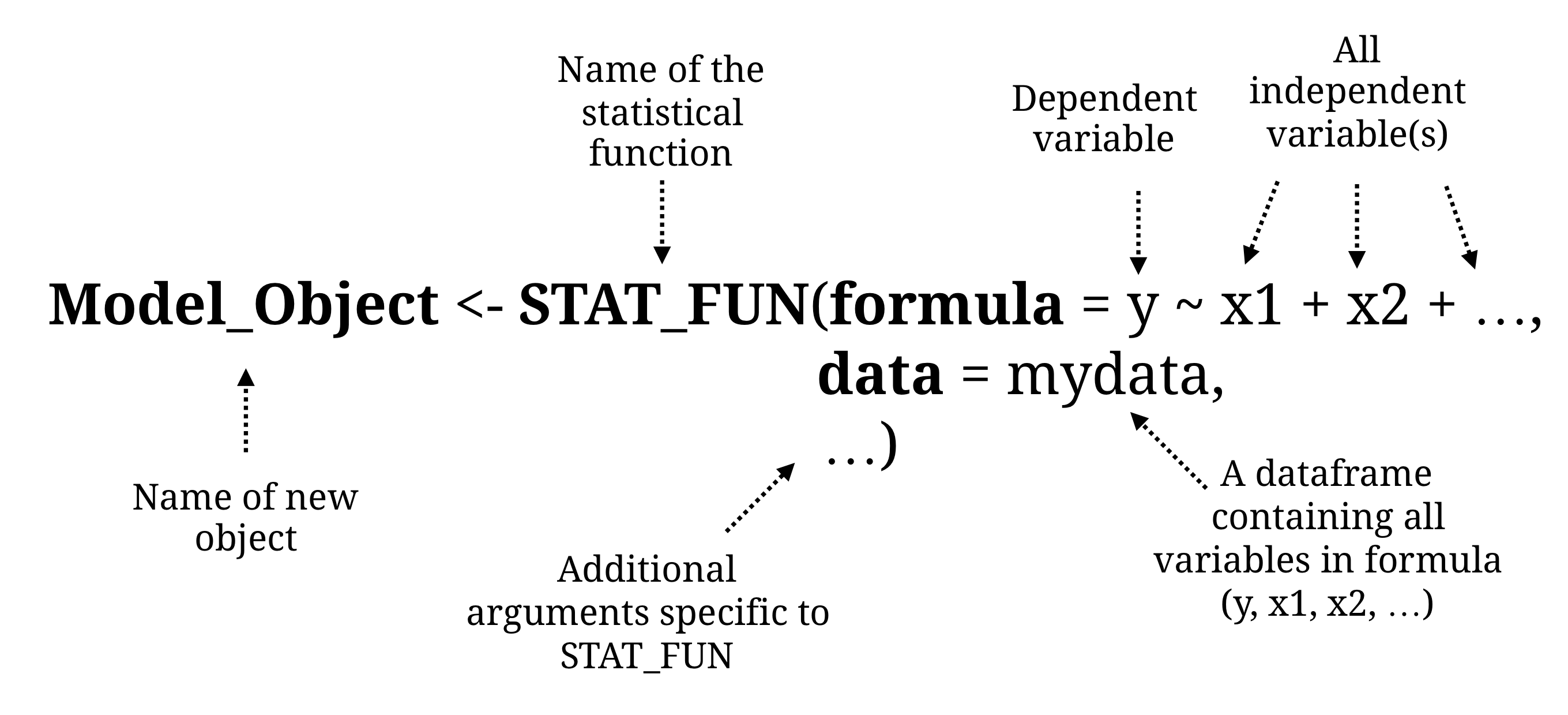

class: center, middle, inverse, title-slide # Statistics ### The R Bootcamp @ Erfurt<br/><a href='https://therbootcamp.github.io'>www.therbootcamp.com</a><br/><a href='https://twitter.com/therbootcamp'><span class="citation">@therbootcamp</span></a> ### June 2018 --- layout: true <div class="my-footer"><span> <a href="https://therbootcamp.github.io/"><font color="#7E7E7E">Erfurt, June 2018</font></a>                                           <a href="https://therbootcamp.github.io/"><font color="#646464">www.therbootcamp.com</font></a> </span></div> --- ## If you want to do statistics in R, there is a package for that! .pull-left5[ <br><br> | Package| Models| |------:|:----| | `stats`|Generalized linear model| | `afex`| Anovas| | `lme4`| Mixed effects regression| | `rpart`| Decision Trees| | `BayesFactor`| Bayesian statistics| | `igraph`| Network analysis| | `neuralnet`| Neural networks| | `MatchIt`| Matching and causal inference| | `survival`| Longitudinal survival analysis| | ...| Anything you can ever want!| ] .pull-right45[ <img src="https://raw.githubusercontent.com/therbootcamp/therbootcamp.github.io/master/_sessions/_image/statistical_procedures.png" width="100%" style="display: block; margin: auto;" /> ] --- .pull-left45[ ## We will cover... Basic structure and arguments of most statistical functions - `formula`, `data` Simple `htest` objects (t.test, correlation) Generalized linear model - `lm()`, `glm()`, `aov()` Common methods to explore statistical objects - `print()`, `summary()`, `names()`, `predict()`, `plot()` Dissect statistical objects with `$` Work with R's library of statistical distributions ] .pull-right5[ ```r # T-test comparing height based on gender t.test(formula = height ~ sex, data = baselers) # Regression model income_glm <- glm(formula = income ~ ., data = baselers %>% select(-id)) # Summary information summary(income_glm) # Dissect income_glm$coefficients # Acess coefficients income_glm$residuals # Access residuals ### Generate random data x1 <- rnorm(n = 100, mean = 10, sd = 5) x2 <- rnorm(n = 100, mean = 5, sd = 1) noise <- rnorm(n= 100, mean = 0, sd = 10) # Create y as a function of x1, x2, and noise y <- x1 + x2 + noise df <- data.frame(x1, x2, y) # Regress y on x1 and x2 lm(formula = y ~ ., data = df) ``` ] --- # Basic structure of statistical functions .pull-left4[ Statistical functions always require a dataframe of <b>data</b> |sex | age| height| weight| income| |:------|---:|------:|------:|------:| |male | 44| 174.3| 113.4| 6300| |male | 65| 180.3| 75.2| 10900| |female | 31| 168.3| 55.5| 5100| They also require a <b>formula</b> that specifies a dependent variable (y) as a function of one or more independent variables (x1, x2, ...) in the form: <font size = 6>formula = y ~ x1 + x2 +...</font> Note: The short hand formula = y ~ . means include <i>all</i> variables in the data ] .pull-right55[ To create a statistical object, apply the statistical function `STAT_FUN` with the `formula` and `data` arguments: <!-- --> ```r # Create regression object (my_glm) my_glm <- glm(formula = income ~ age + height, data = baselers) ``` ] --- .pull-left4[ ## Different tests have different arguments Individual tests may have many optional arguments (look at help menus!) Each of these have *default* values. If you don't specify them, the function will use the default. To customise a test, look at the help menu and specifying arguments directly. ### ?glm() <!-- --> ] .pull-right55[ Default vs. Customised Generalized linear model (GLM) ```r # GLM # Default glm(formula = income ~ age + education, data = baselers) # Customised glm(formula = eyecor ~ age + education, data = baselers, family = "binomial") # Logistic regression ``` Default vs. Customised t-test ```r # T-test # Default t.test(formula = age ~ sex, data = baselers) # Customised t.test(formula = age ~ sex, data = baselers, alternative = "less", # One sided test var.equal = TRUE) # Assume equal variance ``` ] --- .pull-left45[ ## Simple hypothesis tests All of the basic one and two sample hypothesis tests are included in the stats package | Hypothesis Test| R Function| |------:|----:| | T-test| `t.test()`| | Correlation Test| `cor.test()`| | Chi-Square Test| `chisq.test()`| These tests are unique because they can take either formula arguments, or individual vector arguments `x`, and `y` ] .pull-right5[ ### T-Test with t.test() ```r # 1-sample t-test t.test(x = baselers$age, mu = 40) # Mean under H0 ``` ``` ## ## One Sample t-test ## ## data: baselers$age ## t = 28, df = 10000, p-value <2e-16 ## alternative hypothesis: true mean is not equal to 40 ## 95 percent confidence interval: ## 44.29 44.93 ## sample estimates: ## mean of x ## 44.61 ``` ```r # 2-sample t-test t.test(formula = income ~ sex, data = baselers) # Note: Output hidden ``` ] --- .pull-left45[ ## Simple hypothesis tests All of the basic one and two sample hypothesis tests are included in the stats package | Hypothesis Test| R Function| |------:|----:| | T-test| `t.test()`| | Correlation Test| `cor.test()`| | Chi-Square Test| `chisq.test()`| These tests are unique because they can take either formula arguments, or individual vector arguments `x`, and `y` ] .pull-right5[ ### Correlation test with cor.test() ```r # Correlation test cor.test(x = baselers$age, y = baselers$income) ``` ``` ## ## Pearson's product-moment correlation ## ## data: baselers$age and baselers$income ## t = 180, df = 8500, p-value <2e-16 ## alternative hypothesis: true correlation is not equal to 0 ## 95 percent confidence interval: ## 0.8882 0.8968 ## sample estimates: ## cor ## 0.8926 ``` ```r # Version using formula (same result as above) cor.test(formula = ~ age + income, data = baselers) ``` ] --- ## Regression with glm(), lm() .pull-left35[ Part of the `baselers` dataframe: | food| income| happiness| |----:|------:|---------:| | 610| 6300| 5| | 1550| 10900| 7| | 720| 5100| 7| | 680| 4200| 7| | 260| 4000| 5| **Goal**: Create a regression model predicting how much money people spend on `food` as a function of `income` <br> <!-- `$$\Large food = \beta_{0} + \beta_{1} \times Inc + \beta_{1} \times Hap+ \epsilon$$` --> ] .pull-right6[ To conduct a regression analysis, use `glm()` or `lm()`. `lm()` is for simple linear regression, while `glm()` is generalized. Both are part of the `stats` package (already loaded in R) ```r # Create a glm object called food_glm # food (y) on income (x1) and happiness (x2) food_glm <- glm(formula = food ~ income + happiness, data = baselers) # food_glm has clas "glm" class(food_glm) ``` ``` ## [1] "glm" "lm" ``` We now have a "glm" object called `food_glm` that stores all of the results of our regression analysis. ] --- ## Customising formulas You can keep adding terms to formulas by "adding"" them with `+` ```r # Include multiple terms with + my_glm <- glm(formula = income ~ food + alcohol + happiness + hiking, data = baselers) ``` To include *all* variables in a dataframe, use the catch-all notation <b>`formula = y ~ .`</b> ```r # Use y ~ . to include ALL variables my_glm <- glm(formula = income ~ ., data = baselers) ``` To include *interaction terms* use `x1 * x2` instead of `x1 + x2` ```r # Include an interaction term between food and alcohol my_glm <- glm(formula = income ~ food * alcohol, data = baselers) ``` --- .pull-left35[ ## Exploring statistical objects Once you have created a statistical object, you can explore it using many *generic* functions such as `print()`, `summary()`, `predict()` and `plot()`. ```r # Create statistical object obj <- STAT_FUN(formula = ..., data = ...) names(obj) # Elements print(obj) # Print summary(obj) # Summary plot(obj) # Plotting predict(obj, ..) # Predict ``` They won't work for all objects, but it is a great idea to try each of them! ] .pull-right6[ <br> ```r # Create a glm object my_glm <- glm(formula = income ~ happiness + age, data = baselers) ``` Apply the `print()` function to a statistical object ```r # Print the object print(my_glm) ``` ``` ## ## Call: glm(formula = income ~ happiness + age, data = baselers) ## ## Coefficients: ## (Intercept) happiness age ## 1575 -100 149 ## ## Degrees of Freedom: 8509 Total (i.e. Null); 8507 Residual ## (1490 observations deleted due to missingness) ## Null Deviance: 6.33e+10 ## Residual Deviance: 1.28e+10 AIC: 145000 ``` ] --- .pull-left35[ ## Exploring statistical objects Once you have created a statistical object, you can explore it using many *generic* functions such as `print()`, `summary()`, `predict()` and `plot()`. ```r # Create statistical object obj <- STAT_FUN(formula = ..., data = ...) names(obj) # Elements print(obj) # Print summary(obj) # Summary plot(obj) # Plotting predict(obj, ..) # Predict ``` They won't work for all objects, but it is a great idea to try each of them! ] .pull-right6[ <br> ```r # Create a glm object my_glm <- glm(formula = income ~ happiness + age, data = baselers) ``` Apply the `summary()` function to a statistical object ```r # Print the object summary(my_glm) ``` ``` ## ## Call: ## glm(formula = income ~ happiness + age, data = baselers) ## ## Deviance Residuals: ## Min 1Q Median 3Q Max ## -4045 -835 3 814 4899 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 1575.497 94.363 16.70 < 2e-16 *** ## happiness -100.431 12.520 -8.02 1.2e-15 *** ## age 149.312 0.815 183.31 < 2e-16 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## (Dispersion parameter for gaussian family taken to be 1501842) ## ## Null deviance: 6.3307e+10 on 8509 degrees of freedom ## Residual deviance: 1.2776e+10 on 8507 degrees of freedom ## (1490 observations deleted due to missingness) ## AIC: 145186 ## ## Number of Fisher Scoring iterations: 2 ``` ] --- .pull-left35[ ## Exploring statistical objects Once you have created a statistical object, you can explore it using many *generic* functions such as `print()`, `summary()`, `predict()` and `plot()`. ```r # Create statistical object obj <- STAT_FUN(formula = ..., data = ...) names(obj) # Elements print(obj) # Print summary(obj) # Summary plot(obj) # Plotting predict(obj, ..) # Predict ``` They won't work for all objects, but it is a great idea to try each of them! ] .pull-right6[ ### Statistical objects are lists! Explore them! Almost all statistical objects are *lists*. This means they all have named elements you can access with `$`. To see all of the named elements, use `names()` ```r # What are the named elements names(my_glm) ``` ``` ## [1] "coefficients" "residuals" "fitted.values" "effects" "R" ## [6] "rank" "qr" "family" "linear.predictors" "deviance" ## [11] "aic" "null.deviance" "iter" "weights" "prior.weights" ## [16] "df.residual" "df.null" "y" "converged" "boundary" ## [ reached getOption("max.print") -- omitted 11 entries ] ``` <img src="https://raw.githubusercontent.com/therbootcamp/Erfurt_2018June/master/_sessions/_image/list_tab.png" width="70%" style="display: block; margin: auto;" /> ] --- .pull-left35[ ## Exploring statistical objects Once you have created a statistical object, you can explore it using many *generic* functions such as `print()`, `summary()`, `predict()` and `plot()`. ```r # Create statistical object obj <- STAT_FUN(formula = ..., data = ...) names(obj) # Elements print(obj) # Print summary(obj) # Summary plot(obj) # Plotting predict(obj, ..) # Predict ``` They won't work for all objects, but it is a great idea to try each of them! ] .pull-right6[ ### Statistical objects are lists! Explore them! Almost all statistical objects are *lists*. This means they all have named elements you can access with `$`. To see all of the named elements, use `names()` ```r # Look at coefficients my_glm$coefficients ``` ``` ## (Intercept) happiness age ## 1575.5 -100.4 149.3 ``` ```r # First 5 fitted values my_glm$fitted.values[1:5] ``` ``` ## 1 2 3 4 5 ## 7643 10578 5501 4904 4657 ``` ```r # First 5 residuals my_glm$residuals[1:5] ``` ``` ## 1 2 3 4 5 ## -1343.1 322.2 -401.2 -703.9 -656.8 ``` ] --- .pull-left35[ ## predict() Many (but not all) statistical objects have an associated `predict()` method. `predict()` allows you to use the model to make predictions for new data, and understand how the model works. ```r lastyear ``` | id| age| fitness| tattoos| income| |--:|---:|-------:|-------:|------:| | 1| 44| 7| 6| 6300| | 2| 65| 8| 5| 10900| | 3| 31| 6| 3| 5100| ] .pull-right6[ Create model based on old data ```r # Create regression model predicting income mod <- lm(formula = income ~ age + fitness + tattoos, data = lastyear) mod$coefficients ``` ``` ## (Intercept) age fitness tattoos ## 994.1 146.8 75.1 -173.3 ``` ```r thisyear ``` | id| age| fitness| tattoos| |---:|---:|-------:|-------:| | 101| 21| 3| 4| | 102| 23| 6| 8| | 103| 26| 6| 3| ```r # Predict the income of people in thisyear predict(object = mod, newdata = thisyear)[1:3] ``` ``` ## 1 2 3 ## 3610 3436 4742 ``` ] --- .pull-left4[ ## broom::tidy() The `tidy()` function from the `broom` package allows you to convert the key results of *many* (like hundreds) of statistical object like "glm" to a data frame <img src="https://raw.githubusercontent.com/therbootcamp/Erfurt_2018June/master/_sessions/_image/broom_hex.png" width="70%" style="display: block; margin: auto;" /> ```r # Install the broom package install.packages("broom") ``` ] .pull-right55[ ```r # Load boom package library(broom) # Create a tidy dataframe from my_glm tidy(my_glm) ``` ``` ## term estimate std.error statistic p.value ## 1 (Intercept) 1575.5 94.3629 16.696 1.328e-61 ## 2 happiness -100.4 12.5197 -8.022 1.180e-15 ## 3 age 149.3 0.8145 183.315 0.000e+00 ``` The `tidy()` function works all kinds of common statistical objects, from classical regression to Bayesian models, to machine learning. ```r # rstan_example is a "stanfit" object tidy(rstan_example)[1:5,] ``` ``` ## term estimate std.error ## 1 mu 6.71421 5.9803 ## 2 tau 8.10885 7.4085 ## 3 eta[1] 0.47728 0.8941 ## 4 eta[2] 0.06064 0.8221 ## 5 eta[3] -0.22427 0.8589 ``` Try it on your favorite models and see the magic! ] --- .pull-left45[ ## Sampling Functions R gives you a host of functions for sampling data from common statistical distributions | Distribution| R Function| |------:|----:| | Normal| `rnorm()`| | Uniform|`runif()`| | Beta|`rbeta()`| | Binomial|`rbinom()`| You can use these to create simulations and play around with models You can use `sample()` to draw random samples from a vector of values ] .pull-right5[ <font size = 5>?distributions</font> <img src="https://raw.githubusercontent.com/therbootcamp/therbootcamp.github.io/master/_sessions/_image/distributions_help.png" width="90%" style="display: block; margin: auto;" /> ] --- .pull-left45[ ## Sample() Use `sample()` to draw a sample from a vector ```r # Simulate 10 flips of a fair coin coin_flips <- sample(x = c("H", "T"), size = 10, prob = c(.5, .5), replace = TRUE) coin_flips ``` ``` ## [1] "T" "T" "H" "H" "T" "H" "T" "H" "H" "H" ``` ```r # Table of counts table(coin_flips) ``` ``` ## coin_flips ## H T ## 6 4 ``` ```r # What proportion are heads? mean(coin_flips == "H") ``` ``` ## [1] 0.6 ``` ] .pull-right5[ ### Simulate the birthday problem <img src="https://raw.githubusercontent.com/therbootcamp/Erfurt_2018June/master/_sessions/_image/balloons.png" width="20%" style="display: block; margin: auto;" /> ```r # Create an empty room birthdays <- c() # While none of the birthdays are the same, # keep adding new ones while(all(!duplicated(birthdays))) { # Get new birthday new_day <- sample(x = 1:365, size = 1) # Add new_day to birthdays birthdays <- c(birthdays, new_day) } # Done! How many are in the room?? length(birthdays) ``` ``` ## [1] 20 ``` ] --- .pull-left5[ ### rnorm(), runif()... Use the `rnorm()`, `runif()`... functions to draw random samples from probability distributions ```r # Random sample from Normal distribution mysamp <- rnorm(n = 100, # Number of samples mean = 10, # Mean of pop sd = 5) # SD of pop ... mysamp[1:5] # First 5 values ``` ``` ## [1] 10.501 9.980 11.936 2.309 6.631 ``` ```r mean(mysamp) # Mean ``` ``` ## [1] 10.56 ``` ```r sd(mysamp) # Standard deviation ``` ``` ## [1] 4.513 ``` ] .pull-right45[ ```r ggplot(data = tibble(x = mysamp), aes(x = x, y = ..density..)) + geom_histogram(col = "white", bins = 10) + geom_density(col = "red", size = 1.5) + theme_minimal() ``` <img src="Statistics_files/figure-html/unnamed-chunk-47-1.png" width="100%" /> ] --- .pull-left45[ <br><br> ## Other great statistics packages <br> |package|Description| |:----|:-----| |`afex`|Factorial experiments| |`lmer`|Linear mixed effects models| |`BayesFactor`|Bayesian Models| |`forecast`|Time series| |`lavaan`|Latent variable and structural equation modelling| ] .pull-right5[ <img src="https://raw.githubusercontent.com/therbootcamp/Erfurt_2018June/master/_sessions/_image/lavaan_ss.jpg" style="display: block; margin: auto;" /> <img src="https://raw.githubusercontent.com/therbootcamp/Erfurt_2018June/master/_sessions/_image/BayesFactor_ss.png" style="display: block; margin: auto;" /> ] --- .pull-left45[ <br><br> ## Summary R has a package for every statistical procedure you can imagine. Most require a formula, data, and other optional arguments Use help menus to understand arguments and syntax! Once you've created a statistical object, use generic methods (`print()`, `names()`, `summary()`), to explore it Use random sampling functions to run simulations ] .pull-right5[ `?t.test` <img src="https://raw.githubusercontent.com/therbootcamp/therbootcamp.github.io/master/_sessions/_image/ttesthelp_ss.png" width="100%" style="display: block; margin: auto;" /> ] --- <br> ## Questions? <br><br> # [Statistics Practical](https://therbootcamp.github.io/Erfurt_2018June/_sessions/D1S3_Statistics/Statistics_practical.html)